This became very concrete in a recent webinar organised by The Hague Network, bringing together over 200 participants across Europe. The focus was not on technology itself, but on something far more fundamental: what generative AI actually does to teaching and assessment in higher education.

I contributed to the series by giving a presentation titled “Adapting Teaching and Learning Methods to the AI Era”. The results presented here were collected directly from participants through the interactive elements integrated into the webinar session. The discussion quickly moved beyond tools and technologies to a deeper question: what actually needs to change?

At the beginning of the session, participants were asked to describe “AI in education” in one word. The responses (N = 95) were revealing. The most common answer was challenge (46%). Other responses framed AI as an opportunity (22%), a tool (18%), or a risk or threat (14%). What is striking is not disagreement, but the absence of simple answers. AI is not seen as a solution. It is seen as something that forces us to rethink what we are doing.

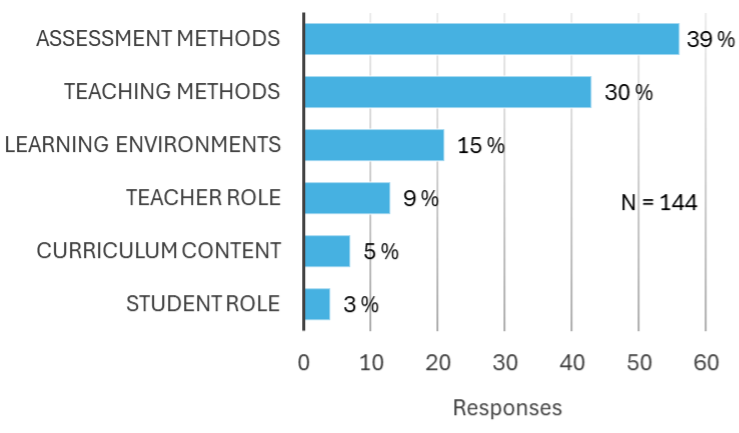

A second question deepened this picture: “What needs to change the most in education in the AI era?” Here the message was even clearer. Assessment methods stood out as the primary area for change (56 responses), followed by teaching methods (43) and learning environments (21). Teacher role (13), curriculum content (7) and student role (4) were mentioned far less frequently. The pattern is telling: the pressure is not primarily on what we teach, but on how we evaluate learning.

From a teacher’s perspective, this challenge is already very concrete. In my own engineering physics courses, it has become evident that unsupervised assignments no longer measure student understanding in a reliable way. If a student can generate a correct-looking solution using AI, what exactly are we assessing?

At the same time, the situation is not as simple as “AI is a problem”. The same tools can act as highly effective learning support. Used well, they function almost like a personal tutor: guiding step-by-step problem solving, offering explanations, and generating additional practice. As demonstrated in the session, AI can either enable shortcut-driven answer production or support an active learning process where the student constructs understanding. On the one hand, AI makes it easier than ever to produce answers without understanding. On the other hand, it offers new ways to support deeper learning. The key question is no longer whether students use AI – they do – but how teaching and assessment should respond to that reality.

In practice, this has led to concrete changes. Unsupervised take-home exams and graded homework have lost much of their value as assessment tools. Instead, more emphasis is needed on supervised situations and tasks where thinking becomes visible. Measurement-based assignments, for example, require students to collect data, interpret results, and connect theory to real-world phenomena. These are much harder to outsource to AI and much closer to the kind of competence we actually want to assess.

AI in education is not primarily a technological issue. It is a pedagogical one. And perhaps that is why the dominant one-word answer was not “opportunity” or “threat”, but simply: “challenge”. Not because AI makes education worse, but because it forces us to ask what meaningful learning and assessment should look like in the first place.

Read more about the webinar series:

https://thehaguenetwork.org/news/03022026_AI_DIDACTICS

Written by: Sami Suhonen, Principal Lecturer, Faculty of Pedagogical Innovations and Culture